Mail Service Components

The mail service on any mail server has three components:

Mail user agent (MUA): the component that the user sees and interacts with, like Thunderbird and Microsoft Outlook. User agents are responsible for reading mail and letting you compose mail.

Mail transport agent (MTA): the component responsible for getting mail from one site to another, like Sendmail and Postfix.

Mail delivery agent (MDA): the component responsible for distributing received messages on the local machine to the appropriate user mailbox, like postfix-maildrop and Procmail.

Setup Email Server

We chose the Postfix mail server, which is very popular among system administrators today.

Postfix is the default mail server on many Linux distros.

First, check if it is installed on your system or not:

rpm -qa | grep postfix

If not installed, you can install Postfix mail server on Red Hat based distros like this:

dnf -y install postfix

Then start the postfix service and enable it on system startup:

systemctl start postfix

systemctl enable postfix

On Debian based distros like Ubuntu, you can install it like this:

apt-get -y install postfix

You will be prompted to select your Postfix mail server configuration type during the installation process.

Among the five choices (No configuration, Internet Site, Internet with smarthost, Satellite system, and Local only), we will choose the No configuration option.

Configure Linux Mail Server

After installing the Postfix mail server, you will need to configure it. Most of its configuration files can be found under the /etc/postfix/ directory.

You can find the main configuration for the Postfix mail server in the /etc/postfix/main.cf file.

This file contains a lot of options like:

myhostname

This option specifies the hostname of the mail server. This is the Internet hostname on which Postfix receives email.

Typical hostnames are mail.example.com or smtp.example.com.

It is written like this:

myhostname = mail.example.com

mydomain

This option is the mail domain that you will be servicing, like example.com.

The syntax is like this:

mydomain = example.com

myorigin

All emails sent from this mail server will look as though they came from the domain set in this option. You can set it to the $mydomain value.

myorigin = $mydomain

You can reuse the value of any option by preceding its name with a $, like $mydomain.

mydestination

This option lists the domains for which the Postfix server accepts incoming emails for local delivery.

It can take values like this:

mydestination = $myhostname, localhost.$mydomain, $mydomain, mail.$mydomain, www.$mydomain

mail_spool_directory

There are two modes of delivery that Postfix mail server can use:

- Directly to a user’s mailbox.

- To a central spool directory. This way, the mail will be in /var/spool/mail with a file for each user.

mail_spool_directory = /var/spool/mail
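As a sketch of the first mode, delivery into a mailbox inside the user's home directory is controlled by the home_mailbox parameter. That parameter is not discussed above, so treat the values here as assumptions to adapt to your setup.

```conf
# /etc/postfix/main.cf (sketch; pick one delivery style)

# mbox file in each user's home directory (~/Mailbox):
home_mailbox = Mailbox

# or Maildir format (~/Maildir/); the trailing slash selects Maildir:
#home_mailbox = Maildir/
```

Whichever style you choose, the program that later reads the mailboxes has to be pointed at the same location and format.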

mynetworks

This option allows you to configure which servers can relay through your Postfix server.

It should list only trusted local addresses, such as those used by mail scripts running on your own server.

Otherwise, spammers can use your mail server to relay their messages, your mail server will get blacklisted, and as a result other servers will reject much of the mail you send.

This option has the following syntax:

mynetworks = 127.0.0.0/8, 192.168.1.0/24

smtpd_banner

This variable sets the greeting message that is sent to the client after a successful connection.

It is better to change the banner to something that doesn’t give an indication about the server you are using.
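For example, a minimal banner that no longer names the mail software might look like the sketch below. The exact wording after the hostname is up to you.

```conf
# /etc/postfix/main.cf
# The stock default is "$myhostname ESMTP $mail_name", which announces Postfix.
# Keep $myhostname at the start of the text; the SMTP greeting must begin with it.
smtpd_banner = $myhostname ESMTP
```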

inet_protocols

This option specifies the IP protocol version used for server connections.

inet_protocols = ipv4

If you change the configuration files for Postfix mail server, you need to reload the service:

systemctl reload postfix

When you edit the configuration, you may make a mistake. You can check for errors using the following command:

postfix check

This tool will help you find exactly the line and the error so you can fix it.

Checking the Mail Queue

Sometimes the mail queues on your system fill up. This can happen for many reasons, such as a network failure or anything else that delays mail delivery.

To check the mail queue on your Linux mail server, use the following command:

mailq

This command shows the Postfix mail queue.
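If you only want the number of queued messages, for example in a monitoring script, you can read it from the summary line that mailq prints last. The helper below is a small sketch; the function name queue_count is made up for illustration, and it assumes the usual Postfix summary format ("-- 23 Kbytes in 5 Requests." or "Mail queue is empty").

```shell
# Read `mailq` output on stdin and print the number of queued messages.
# The summary line looks like "-- 23 Kbytes in 5 Requests."; the count is
# the second-to-last field. An empty queue has no such line, so we print 0.
queue_count() {
    awk '/^-- .* Requests?\.$/ { n = $(NF - 1) } END { print n + 0 }'
}
```

On a live server you would call it as `mailq | queue_count`.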

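If your queue is filling up and messages take several hours to be sent, you can flush the mail queue, which makes Postfix attempt to deliver all queued messages immediately:

postfix flush

If delivery succeeds, you should find the mail queue empty when you check it again. Messages that still cannot be delivered stay in the queue.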

Test Linux Mail Server

After configuring Postfix mail server correctly, you should test your mail server.

The first step is to use a local mail user agent like mailx, or mail, which is a symlink to mailx.

Try to send a mail to someone else on the same server. If this works, then send to a remote site. In the command below, sender@example.com and recipient@example.com are placeholders for real addresses:

echo "This is message body" | mailx -s "This is Subject" -r "likegeeks<sender@example.com>" -a /path/to/attachment recipient@example.com

Then try to receive a mail from a remote site.

If you have any problems, check the logs. The log file is /var/log/maillog on Red Hat based distros and /var/log/mail.log on Debian based distros, or whatever is defined in the rsyslogd configuration.

I recommend reviewing the Linux Syslog Server post for a detailed explanation of logs and how to configure rsyslogd.

If you still have problems, try checking your DNS settings and check your MX records using Linux network commands.
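One way to check MX records is with dig, whose short output prints one "preference host" pair per line. The helper below is a sketch that picks the most preferred host (lowest preference number) from that output; the name primary_mx is made up for illustration.

```shell
# Read `dig +short MX <domain>` output on stdin (lines like "10 mail.example.com.")
# and print the host with the lowest preference value, i.e. the one tried first.
primary_mx() {
    sort -n | awk 'NR == 1 { print $2 }'
}
```

With network access and dig installed, you would run `dig +short MX example.com | primary_mx` and confirm that the result is the hostname of your mail server.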

Secure Mail Boxes From Spam Using SpamAssassin

One way to fight spam is to scan incoming messages with a tool that searches for patterns associated with spam.

One of the best solutions is SpamAssassin, which is open-source.

You can install it like this:

dnf -y install spamassassin

Then start the service and enable it at startup:

systemctl start spamassassin

systemctl enable spamassassin

Once you’ve installed it, you can check the configuration in the /etc/mail/spamassassin/local.cf file.

SpamAssassin decides whether an email is spam based on the combined score of its different tests.

The higher the score of a message, the more likely it is to be spam.

In the configuration file, the parameter required_hits 5 indicates that SpamAssassin will mark an email as spam if its score is five or higher.
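To see the score a message actually received, you can read the X-Spam-Status header that SpamAssassin adds to it. The two helpers below are a sketch; the names spam_score and is_spam are made up for illustration, and the header layout ("X-Spam-Status: Yes, score=7.2 required=5.0 ...") is assumed to be the standard one.

```shell
# Read a mail message on stdin and print the score from its X-Spam-Status header.
spam_score() {
    sed -n 's/^X-Spam-Status:.*score=\(-\{0,1\}[0-9.]*\).*/\1/p' | head -n 1
}

# Succeed if the given score reaches the threshold (default 5, as above).
is_spam() {
    awk -v s="$1" -v t="${2:-5}" 'BEGIN { exit !(s + 0 >= t + 0) }'
}
```

For a message saved as a file, `is_spam "$(spam_score < message.eml)"` succeeds when the score is five or higher; message.eml is a placeholder file name.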

The report_safe option takes the values 0, 1, or 2. If it is set to 0, an email marked as spam is delivered as it is, with only the headers modified to show that it is spam.

If it is set to 1 or 2, SpamAssassin generates a new report message and sends that to the recipient, with the original spam message attached.

If the value is 1, the spam message is attached with the content type message/rfc822, while if the value is 2, it is attached as text/plain.

The text/plain setting is safer, since some mail clients open message/rfc822 attachments automatically, which could expose the client computer to malicious content.

Now we need to integrate it into Postfix. The simplest way to do this is probably by using procmail.

We’ll have to create a file, named /etc/procmailrc, and add the following content:

:0 hbfw

| /usr/bin/spamc

Then we edit the Postfix configuration file /etc/postfix/main.cf and change mailbox_command like this:

mailbox_command = /usr/bin/procmail

Finally, restart Postfix and SpamAssassin services:

systemctl restart postfix

systemctl restart spamassassin

However, SpamAssassin sometimes fails to recognize spam messages, which leaves mailboxes filled with spam.

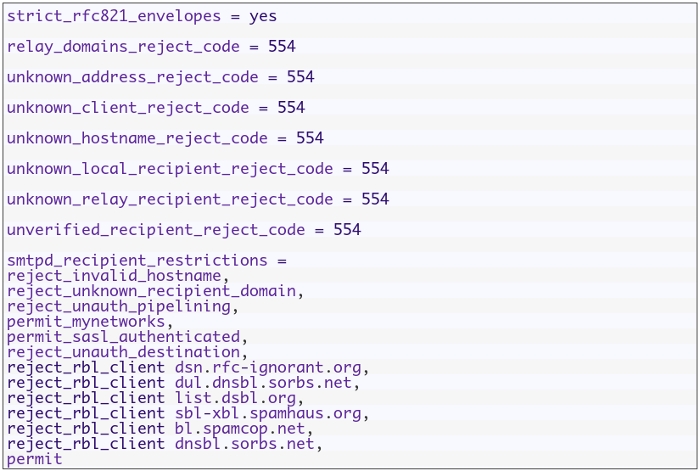

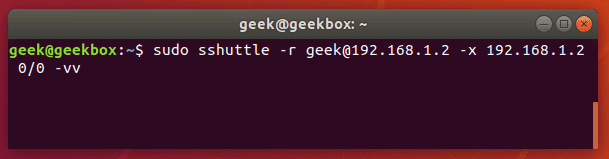

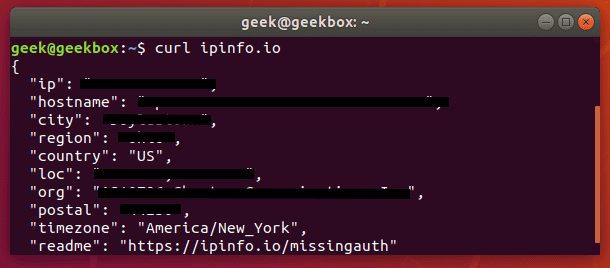

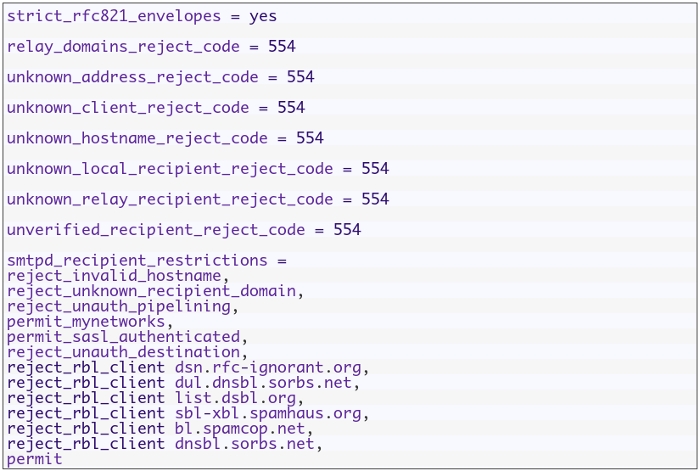

Fortunately, you can filter messages before they enter the Postfix server using Realtime Blackhole Lists (RBLs). That will decrease the load on your mail server and keep your mail server clean.

Open the Postfix configuration file /etc/postfix/main.cf and add a reject_rbl_client entry to the smtpd_recipient_restrictions option for each RBL you want to query.
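As a sketch of what such a setting can look like, the fragment below queries two well-known public lists. The two zone names are assumptions chosen for illustration, not a recommendation from this post; check each list's usage policy before relying on it.

```conf
# /etc/postfix/main.cf (sketch; replace the RBL zones with the lists you choose)
smtpd_recipient_restrictions =
    permit_mynetworks,
    reject_unauth_destination,
    reject_rbl_client zen.spamhaus.org,
    reject_rbl_client bl.spamcop.net
```

The restrictions are evaluated in order, so clients in mynetworks are accepted before any RBL lookup happens.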

Then restart your postfix server:

systemctl restart postfix

Several public RBLs are in common use. You can find more lists on the web and try them.

Securing SMTP Connection

It is better to transfer your SMTP traffic over TLS to protect it from being read or modified in transit.

First, we need to generate the certificate and the key using the openssl command:

openssl genrsa -des3 -out mail.key

openssl req -new -key mail.key -out mail.csr

cp mail.key mail.key.original

openssl rsa -in mail.key.original -out mail_secure.key

openssl x509 -req -days 365 -in mail.csr -signkey mail_secure.key -out mail_secure.crt

cp mail_secure.crt /etc/postfix/

cp mail_secure.key /etc/postfix/
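As an alternative to the multi-step sequence above, openssl can create an unencrypted key and a self-signed certificate in a single command. The sketch below writes into a temporary directory so it has no side effects; mail.example.com is a placeholder hostname, and the 2048-bit key size is an assumption.

```shell
# Work in a throwaway directory so nothing under /etc is touched.
workdir=$(mktemp -d)

# -x509 emits a self-signed certificate instead of a signing request,
# -nodes leaves the private key without a passphrase so Postfix can read it.
openssl req -x509 -newkey rsa:2048 -nodes \
    -keyout "$workdir/mail_secure.key" \
    -out "$workdir/mail_secure.crt" \
    -days 365 -subj "/CN=mail.example.com" 2>/dev/null

# Show the subject and validity dates of the new certificate.
openssl x509 -in "$workdir/mail_secure.crt" -noout -subject -dates
```

If you take this route, copy the two files from the temporary directory to /etc/postfix/ in the same way.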

Then add the following options to the Postfix configuration file /etc/postfix/main.cf:

smtpd_use_tls = yes

smtpd_tls_cert_file = /etc/postfix/mail_secure.crt

smtpd_tls_key_file = /etc/postfix/mail_secure.key

smtp_tls_security_level = may

Finally, restart your postfix service:

systemctl restart postfix

Now you have to select TLS in your mail client when connecting to the server.

You will receive a warning the first time you send mail after changing the setting, because the certificate is self-signed rather than signed by a trusted certificate authority.

Using Let’s Encrypt Certificates

Let’s Encrypt is a free SSL/TLS certificate authority that enables you to encrypt your traffic.

Instead of using self-signed certificates, which annoy your users with trust warnings, you can use certificates from Let’s Encrypt.

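First, install certbot, the Let’s Encrypt client (older releases shipped it under the name letsencrypt). On Red Hat based distros it is available from the EPEL repository:

dnf -y install certbot

Or if you are using a Debian based distro, you can use the following command:

apt-get -y install certbot

Then run certbot like this:

certbot certonly --standalone -d yourdomain.com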

You should replace yourdomain.com with your actual domain.

After answering the prompted questions about the contact email, the email server domain, and the license, everything should be OK now.

The certificates will be stored in /etc/letsencrypt/live/yourdomain.com/

The last thing you have to do is make Postfix use those certificates. You can use the following commands:

sudo postconf -e 'smtpd_tls_cert_file = /etc/letsencrypt/live/yourdomain.com/fullchain.pem'

sudo postconf -e 'smtpd_tls_key_file = /etc/letsencrypt/live/yourdomain.com/privkey.pem'

Don’t forget to replace yourdomain.com with your actual domain.

Finally, restart your Postfix server:

systemctl restart postfix

POP3 and IMAP Protocol Basics

So far we’ve seen how an SMTP mail server sends and receives emails without problems, but consider the following situations:

- Users need local copies of email for offline viewing.

- The mbox file format is not supported by the user’s mail client. The mbox format is used by many mail user agents like mailx and mutt.

- Users cannot stay connected to a fast network long enough to grab a local copy to read offline.

- Some mail servers don’t give access to the shared mail spool directories for security reasons.

To handle these cases, another class of protocols was introduced, which may be described as mail access protocols.

The two most popular mail access protocols are Post Office Protocol (POP) and Internet Message Access Protocol (IMAP).

The idea behind POP is very simple: a central Linux mail server remains online all the time and receives and stores emails for all users. All received emails are queued on the server until a user grabs them.

When a user wants to send an email, the email client relays it through the central Linux mail server via SMTP normally.

Note that the SMTP server and POP server can be on the same system without any problem. Most servers do this today.

POP lacked features like keeping a master copy of a user’s email on the server, which led to the development of IMAP.

By using IMAP, your Linux mail server will support three modes of access:

- The online mode is similar to having direct file system access to the Linux mail server.

- The offline mode is similar to how POP works, where the client is disconnected from the network except when grabbing their email. In this mode, the server normally does not retain a copy of the email.

- The disconnected mode works by allowing users to keep cached copies of their emails while the server also retains a copy.

There are several implementations of IMAP and POP. The most popular one is the Dovecot server, which provides both protocols.

POP3, POP3S, IMAP, and IMAPS listen on ports 110, 995, 143, and 993 respectively.

Installing Dovecot

Some Linux distros come with Dovecot preinstalled. If yours does not, you can install Dovecot on Red Hat based distros like this:

dnf -y install dovecot

On Debian based distros, the IMAP and POP3 functionality is provided in two separate packages. You can install them like this:

apt-get -y install dovecot-imapd dovecot-pop3d

You will be prompted to create self-signed certificates for using IMAP and POP3 over SSL/TLS. Select yes and enter the hostname for your system when prompted.

Then you can run the service and enable it at startup like this:

systemctl start dovecot

systemctl enable dovecot

Configure Dovecot

The main configuration file for Dovecot is /etc/dovecot/dovecot.conf.

Some Linux distros put the configuration under the /etc/dovecot/conf.d/ directory and use the include directive to pull in the settings from the files there.

The following are some of the parameters used to configure Dovecot:

protocols: the protocols you want to support.

protocols = imap pop3 lmtp

lmtp stands for Local Mail Transfer Protocol.

listen: IP addresses to listen on.

listen = *, ::

The asterisk means all IPv4 interfaces and :: means all IPv6 interfaces.

userdb: the user database, used to look up account details such as the home directory, UID, and GID.

userdb {

driver = passwd

}

passdb: the password database, used for authenticating users.

passdb {

driver = pam

}

mail_location: the location of the users’ mailboxes. This entry is in the /etc/dovecot/conf.d/10-mail.conf file, and it is written like this:

mail_location = mbox:~/mail:INBOX=/var/mail/%u

Dovecot comes with a generic self-signed SSL certificate and key, which are referenced in the /etc/dovecot/conf.d/10-ssl.conf file:

ssl_cert = </etc/pki/dovecot/certs/dovecot.pem

ssl_key = </etc/pki/dovecot/private/dovecot.pem

When a user tries to connect to the Dovecot server, the mail client will show a warning because the certificate is self-signed. You can purchase a certificate from a certificate authority if you want.

Or if you go with Let’s Encrypt certificates, you can point to them instead:

ssl_cert = </etc/letsencrypt/live/yourdomain.com/fullchain.pem

ssl_key = </etc/letsencrypt/live/yourdomain.com/privkey.pem

Don’t forget to open the Dovecot server ports, along with the SMTP port, in your iptables firewall by adding iptables rules for ports 110, 995, 143, 993, and 25.

Then save the rules.
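The rules themselves could look like the sketch below. It only prints the commands so you can review them first; appending to the INPUT chain with an ACCEPT target is an assumption about your ruleset, and the function name print_mail_rules is made up for illustration.

```shell
# Ports for SMTP, POP3, IMAP, IMAPS and POP3S.
mail_ports="25 110 143 993 995"

# Print (not apply) one iptables rule per mail port.
print_mail_rules() {
    for port in $mail_ports; do
        echo "iptables -A INPUT -p tcp --dport $port -j ACCEPT"
    done
}

print_mail_rules
```

Once the output looks right, you can apply it as root, for example with `print_mail_rules | sh`.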

Or if you are using firewalld you can do the following:

firewall-cmd --permanent --add-port=110/tcp --add-port=995/tcp

firewall-cmd --permanent --add-port=143/tcp --add-port=993/tcp

firewall-cmd --reload

And again, for troubleshooting, check the log files /var/log/messages, /var/log/maillog, and /var/log/mail.log.

A Linux mail server is one of the easiest servers to work with, especially the Postfix mail server.

I hope you find the post useful and interesting. Keep coming back.

Thank you.

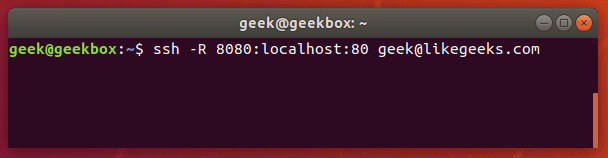

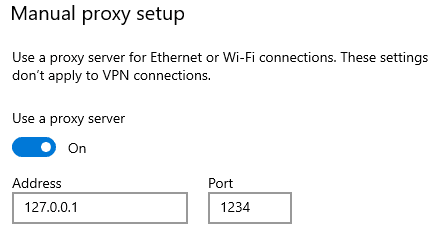

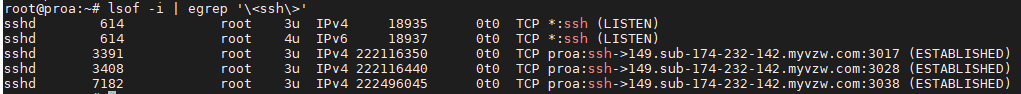

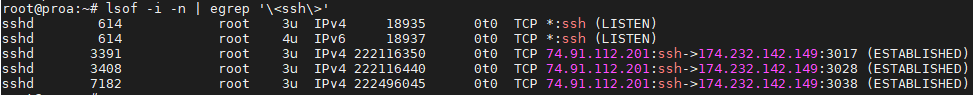

Hackers can access, steal and sell your online activity data as well as manipulate it if you don’t use the right system and tools. The level of protection you want will largely influence which tools and systems to use. With a Linux system and VPN, it becomes possible to hide your browsing tracks, personal information, and various other online activities. When you have the right protection in place, not even the government can access your activity. Keep reading to learn how businesses and individuals alike can use a Linux system and VPN for ongoing protection of their online data. We will also explore why this is important and why you should care about your online data. Hackers steal data for a number of reasons. Sometimes, it’s’ for their own purposes. Other times, they sell it or give it to other entities. These entities may or may not have known about the data collection processes the hackers use to gather the data.

Hackers can access, steal and sell your online activity data as well as manipulate it if you don’t use the right system and tools. The level of protection you want will largely influence which tools and systems to use. With a Linux system and VPN, it becomes possible to hide your browsing tracks, personal information, and various other online activities. When you have the right protection in place, not even the government can access your activity. Keep reading to learn how businesses and individuals alike can use a Linux system and VPN for ongoing protection of their online data. We will also explore why this is important and why you should care about your online data. Hackers steal data for a number of reasons. Sometimes, it’s’ for their own purposes. Other times, they sell it or give it to other entities. These entities may or may not have known about the data collection processes the hackers use to gather the data.

In this tutorial, we will cover

In this tutorial, we will cover

If you want to send or receive an email, you should have a mail server. In this post, we will discuss

If you want to send or receive an email, you should have a mail server. In this post, we will discuss

In the previous post, we talked about how to

In the previous post, we talked about how to  In the previous post we talked about some

In the previous post we talked about some